Saliency and Attention: MUltimodality, context-awaReness, self-Adaptation and bio-Inspiration

Abstract:

Our knowledge of the world is shaped by human perception. Our sensory and motor capabilities allow us to understand and interact with reality, and cognition is the result of these interactions. Mimicking such brain functions is one of the most challenging scientific endeavours that technologists have embraced, under the name of cognitive computation, with the aim of building biologically inspired intelligent machines.

Saliency is a key cognitive mechanism that prioritizes particular stimuli over others: while exploring the world, our brain decides what is relevant and what is not in each particular situation.

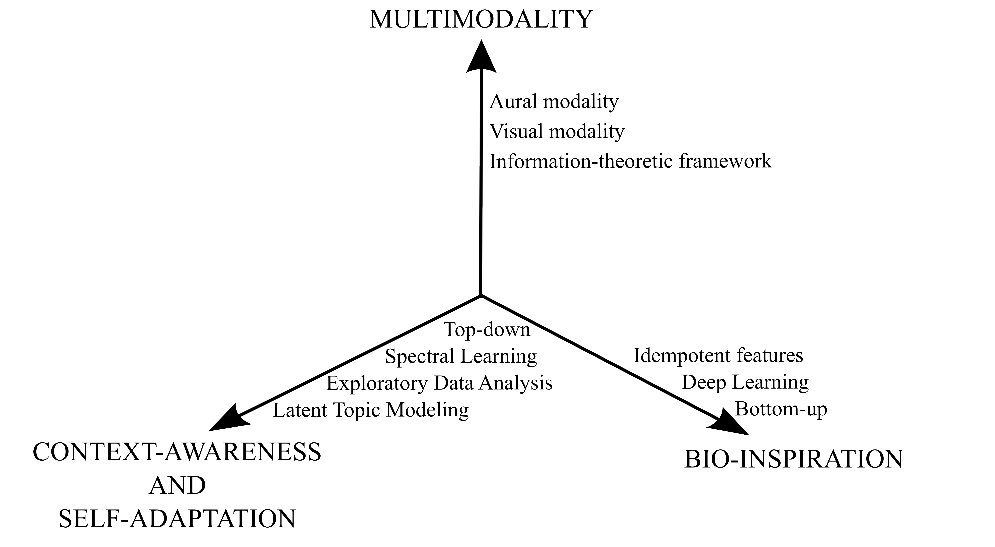

From a research perspective we identify the following key directions for advancing this technology:

- Multimodality: humans do not perceive the world through a single modality, yet most research specializes in one modality and has a limited understanding of the others. Based on our experience, we propose to integrate the two main human modalities, aural and visual. This integration pivots around two ideas: first, an information-theoretic perspective on evaluation, which improves the interpretability of the results and is expected to provide a helpful metric for the fusion; and second, an understanding of the role of time.

- Bio-inspiration: deep learning algorithms have had a profound impact on a large number of computational tasks and have also been employed to build models of visual saliency, mainly for fusing maps based on different features. However, to our knowledge, their application to aural saliency has not been explored. Mathematical morphology has also proven to be an advantageous tool for mimicking psychoacoustic properties of the human auditory system.

- Context-awareness and self-adaptation: in contrast with the abundant literature on visual bottom-up saliency (the one based on low-level features or stimuli), the modeling of top-down visual attention remains an open problem, since its solutions are, in general, task-dependent. We aim to integrate bottom-up and top-down models by adopting a general framework in which user goals and their relationship with low-level stimuli can be learnt and adapted to a particular context (task, individual, environment, etc.). Our proposed contribution in this area is the capability of acquiring knowledge through the discovery of latent classes, topics, tasks or events, together with exploratory analysis guided by experts.

From a methodological point of view, we adopt an end-user perspective, since knowledge of the perceptual relevance of audio-visual items can be applied to several problems, e.g. object recognition, action classification or event detection. This involves not only developing algorithms that incorporate saliency, but also changing the evaluation protocol, moving from the traditional evaluation that assesses the alignment of saliency maps and human fixations to a more meaningful one.
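As an illustration of the traditional protocol mentioned above, one widely used alignment measure is the Normalized Scanpath Saliency (NSS): the saliency map is z-scored and then averaged at the fixated pixels. The sketch below is only an example of that family of metrics; the function name `nss` and the list-of-lists map layout are assumptions made here, and the text does not state which metrics the project actually used.

```python
from statistics import mean, pstdev


def nss(saliency_map, fixations):
    """Normalized Scanpath Saliency for one image.

    saliency_map: 2-D list of floats (rows of pixel saliency values).
    fixations: iterable of (row, col) pixel positions fixated by observers.
    Returns the mean z-scored saliency at the fixated positions.
    """
    values = [v for row in saliency_map for v in row]
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation over all pixels
    if sigma == 0:
        raise ValueError("saliency map is constant; NSS is undefined")
    return mean((saliency_map[r][c] - mu) / sigma for r, c in fixations)


# Toy 2x2 map with a single salient pixel at (1, 1).
toy_map = [[0.0, 0.0],
           [0.0, 1.0]]
print(nss(toy_map, [(1, 1)]))  # positive: the fixation lands on the salient pixel
print(nss(toy_map, [(0, 0)]))  # negative: the fixation lands on a non-salient pixel
```

A score above zero means fixations fall on regions the model rates as more salient than average. A task-oriented evaluation would instead measure how much a downstream system (for example, an event detector) improves when it uses the saliency signal.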

Under this conceptual framework, the purpose of this project is twofold: first, to advance the technology in each of the previous three directions and, second, to develop a set of multi-purpose computational tools ready to be assembled into different applications such as event detection, object recognition, video annotation and indexing, personalized information retrieval or recommender systems, bio-imaging based diagnosis, healthcare, etc.

Figure 1. Conceptual Axes of the project

Conclusions:

The main goal of the SAMURAI project was to understand a cognitive mechanism that is basic to human survival: attention. It is the mechanism by which we prioritize certain stimuli over others: our brain decides what is relevant and what is not in each situation as it explores the world. The methodology we have used to understand this mechanism is to build computational models of it, following Feynman's philosophy that “what I cannot create, I do not understand”.

Understanding that this phenomenon is genuinely multimodal, we have worked mainly on two modalities: visual and aural.

In the first case, the state of the art is much more advanced, mainly because of the availability of sensors (eye-trackers) capable of recording the positions (eye fixations) to which we pay most attention. In this case we have therefore been able to distinguish the mechanism of saliency (bottom-up) from the more general one of attention (top-down, or mediated by the subject's task or goal) and to propose a hierarchical system based on latent topic models that maps low-level stimuli and features to task-directed attention. In particular, this mapping goes through an intermediate layer (the latent topics) that represents sub-tasks of special interest for modeling attention in specific scenarios, in which a subject wants to solve a particular task.
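The role of the intermediate topic layer can be pictured with a deliberately simplified sketch. It assumes that each latent topic (sub-task) is a set of weights over low-level feature maps and that the current context is summarized by a vector of topic proportions; the attention map is then the topic-weighted blend of the feature maps. The function `topic_attention`, the feature names and the linear blending rule are all hypothetical choices made for illustration and are not taken from the project's actual model.

```python
def topic_attention(feature_maps, topic_feature_weights, topic_proportions):
    """Blend low-level feature maps through a latent-topic layer.

    feature_maps: dict mapping feature name -> 2-D list (all the same shape).
    topic_feature_weights: one dict per topic, mapping feature name -> weight.
    topic_proportions: probability of each topic in the current context.
    """
    names = list(feature_maps)
    rows = len(feature_maps[names[0]])
    cols = len(feature_maps[names[0]][0])
    # Effective weight of each feature after summing over topics.
    effective = {
        name: sum(p * w.get(name, 0.0)
                  for p, w in zip(topic_proportions, topic_feature_weights))
        for name in names
    }
    return [
        [sum(effective[n] * feature_maps[n][r][c] for n in names)
         for c in range(cols)]
        for r in range(rows)
    ]


# Two hypothetical features and two hypothetical sub-tasks (topics).
features = {"color": [[1.0, 0.0]], "motion": [[0.0, 1.0]]}
topics = [{"color": 1.0}, {"motion": 1.0}]

print(topic_attention(features, topics, [1.0, 0.0]))    # context dominated by topic 0
print(topic_attention(features, topics, [0.25, 0.75]))  # context dominated by topic 1
```

Changing only the topic proportions shifts attention from one feature to another, which is the sense in which the same low-level stimuli can yield different attention maps for different tasks.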

In the second case, the difficulty of obtaining empirical measurements of aural attention led us to opt for unsupervised methods. We have thus developed an unsupervised aural attention system based on Bayesian methods, the biological concept of echoic memory (auditory sensory memory), and the fusion of information at several temporal scales by means of different statistical distances or divergences. We have evaluated this system on acoustic event detection tasks and analyzed its robustness under various ambient noise conditions.
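A toy sketch can make the multi-scale idea concrete. It assumes a one-dimensional signal of frame energies, models the recent past (the "memory") as a Gaussian, and scores each new frame by the Kullback-Leibler divergence between the model after and before seeing that frame. Several memory lengths are run in parallel and fused with a plain average, which is a deliberately simpler stand-in for the statistical distances or divergences mentioned above. All function names, the Gaussian model, the variance floor and the memory lengths are assumptions made here, not the project's implementation.

```python
import math


def gaussian_kl(m1, v1, m0, v0):
    """KL( N(m1, v1) || N(m0, v0) ) for one-dimensional Gaussians."""
    return 0.5 * (math.log(v0 / v1) + (v1 + (m1 - m0) ** 2) / v0 - 1.0)


def fit(window, floor=1e-3):
    """Mean and variance of a window; the variance is floored to stay positive."""
    mu = sum(window) / len(window)
    var = sum((x - mu) ** 2 for x in window) / len(window)
    return mu, max(var, floor)


def surprise(signal, memory):
    """Per-frame surprise for a single memory length (in frames)."""
    out = [0.0] * len(signal)
    for t in range(memory, len(signal)):
        prior = fit(signal[t - memory:t])              # model before frame t
        posterior = fit(signal[t - memory + 1:t + 1])  # model after frame t
        out[t] = gaussian_kl(*posterior, *prior)
    return out


def multiscale_surprise(signal, memories=(2, 4, 8)):
    """Average the surprise signals obtained with several memory lengths."""
    per_scale = [surprise(signal, m) for m in memories]
    return [sum(vals) / len(vals) for vals in zip(*per_scale)]


# Flat background with a single loud frame at index 20.
energies = [1.0] * 20 + [10.0] + [1.0] * 20
fused = multiscale_surprise(energies)
print(fused.index(max(fused)))  # index of the most salient frame
```

Short memories react sharply to abrupt changes, while long memories also respond to slower drifts; combining them is what lets a single saliency signal cover events of different durations.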

Finally, although this line of work remains open, we have developed a visual saliency detection system based on the influence that auditory saliency exerts on perception.

We believe that, beyond the scientific and technological impact of the advance in knowledge that SAMURAI has brought about, the socio-economic impact can be very high, since we have identified applications in the areas of health, security, transport and tourism, to which we are already working to transfer this knowledge.

Keywords:

Saliency, attention, multimodality, bioinspiration, deep learning, latent topics, exploratory analysis, cognitive computation

Download here the Layman Report

Publications

(Open access in our Institutional Repository: https://e-archivo.uc3m.es/handle/10016/1591)

- K. A. Abdalmalak and A. Gallardo-Antolín, “Enhancement of a text-independent speaker verification system by using feature combination and parallel structure classifiers,” Neural Computing and Applications, vol. 29, iss. 3, pp. 637-651, 2018. doi: 10.1007/s00521-016-2470-x

- J. Ludeña-Choez, R. Quispe-Soncco, and A. Gallardo-Antolín, “Bird sound spectrogram decomposition through Non-Negative Matrix Factorization for the acoustic classification of bird species,” PLOS ONE, vol. 12, iss. 6, pp. 1-20, 2017. doi: 10.1371/journal.pone.0179403

- F. de-la-Calle-Silos and R. M. Stern, “Synchrony-Based Feature Extraction for Robust Automatic Speech Recognition,” IEEE Signal Processing Letters, vol. 24, iss. 8, pp. 1158-1162, 2017. doi: 10.1109/LSP.2017.2714192

- M. Molina-Moreno, I. González-Díaz, and F. Díaz-de-María, “Efficient Scale-Adaptive License Plate Detection System,” IEEE Transactions on Intelligent Transportation Systems, pp. 1-13, 2018. doi: 10.1109/TITS.2018.2859035

- I. González-Díaz, “DermaKNet: Incorporating the knowledge of dermatologists to Convolutional Neural Networks for skin lesion diagnosis,” IEEE Journal of Biomedical and Health Informatics, pp. 1-1, 2018. doi: 10.1109/JBHI.2018.2806962

- J. López-Labraca, M. Á. Fernández-Torres, I. González-Díaz, F. Díaz-de-María, and Á. Pizarro, “Enriched dermoscopic-structure-based cad system for melanoma diagnosis,” Multimedia Tools and Applications, vol. 77, iss. 10, pp. 12171-12202, 2018. doi: 10.1007/s11042-017-4879-3

- E. Martínez-Enríquez, J. Cid-Sueiro, F. Díaz-de-María, and A. Ortega, “Optimized Update/Prediction Assignment for Lifting Transforms on Graphs,” IEEE Transactions on Signal Processing, vol. 66, iss. 8, pp. 2098-2111, 2018. doi: 10.1109/TSP.2018.2802465

- E. Martínez-Enríquez, J. Cid-Sueiro, F. Díaz-de-María, and A. Ortega, “Directional Transforms for Video Coding Based on Lifting on Graphs,” IEEE Transactions on Circuits and Systems for Video Technology, vol. 28, iss. 4, pp. 933-946, 2018. doi: 10.1109/TCSVT.2016.2633418

- J. L. González-de-Suso, E. Martínez-Enríquez, and F. Díaz-de-María, “Adaptive Lagrange multiplier estimation algorithm in HEVC,” Signal Processing: Image Communication, vol. 56, pp. 40-51, 2017. doi: 10.1016/j.image.2017.04.010

- A. Jiménez-Moreno, E. Martínez-Enríquez, and F. Díaz-de-María, “Bayesian adaptive algorithm for fast coding unit decision in the High Efficiency Video Coding (HEVC) standard,” Signal Processing: Image Communication, vol. 56, pp. 1-11, 2017. doi: 10.1016/j.image.2017.04.004

- I. González-Díaz, V. Buso, and J. Benois-Pineau, “Perceptual modeling in the problem of active object recognition in visual scenes,” Pattern Recognition, vol. 56, iss. Supplement C, pp. 129-141, 2016.

- A. Hernández-García, F. Fernández-Martínez, and F. Díaz-de-María, “Comparing visual descriptors and automatic rating strategies for video aesthetics prediction,” Signal Processing: Image Communication, vol. 47, pp. 280-288, 2016. doi: 10.1016/j.image.2016.07.004

- F. J. Valverde-Albacete and C. Peláez-Moreno, “Assessing Information Transmission in Data Transformations with the Channel Multivariate Entropy Triangle,” Entropy, vol. 20, iss. 7, art. 498, 2018. doi: 10.3390/e20070498

- A. Rodríguez-Hidalgo, C. Peláez-Moreno, and A. Gallardo-Antolín, “Echoic log-surprise: A multi-scale scheme for acoustic saliency detection,” Expert Systems with Applications, vol. 114, pp. 255-266, 2018. doi: 10.1016/j.eswa.2018.07.018

- M. Á. Fernández-Torres, I. González-Díaz, and F. Díaz-de-María, “A probabilistic topic approach for context-aware visual attention modeling,” in 2016 14th International Workshop on Content-Based Multimedia Indexing (CBMI), 2016, pp. 1-6. doi: 10.1109/CBMI.2016.7500272

- J. Ludeña-Choez and A. Gallardo-Antolín, “Acoustic Event Classification using spectral band selection and Non-Negative Matrix Factorization-based features,” Expert Systems with Applications, vol. 46, pp. 77-86, 2016. doi: 10.1016/j.eswa.2015.10.018

- A. Jiménez-Moreno, E. Martínez-Enríquez, and F. Díaz-de-María, “Complexity Control Based on a Fast Coding Unit Decision Method in the HEVC Video Coding Standard,” IEEE Transactions on Multimedia, vol. 18, iss. 4, pp. 563-575, 2016. doi: 10.1109/TMM.2016.2524995


- M. de-Frutos-Lopez, J. L. González-de-Suso, S. Sanz-Rodriguez, C. Peláez-Moreno, and F. Díaz-de-María, “Two-level sliding-window VBR control algorithm for video on demand streaming,” Signal Processing: Image Communication, vol. 36, pp. 1-13, 2015. doi: 10.1016/j.image.2015.05.004

- F. J. Valverde-Albacete, J. M. González-Calabozo, A. Peñas, and C. Peláez-Moreno, “Supporting scientific knowledge discovery with extended, generalized Formal Concept Analysis,” Expert Systems with Applications, vol. 44, pp. 198-216, 2016. doi: 10.1016/j.eswa.2015.09.022

Conferences

- E. Rituerto-González, A. Gallardo-Antolín, and C. Peláez-Moreno, “Speaker Recognition under Stress Conditions,” in Proceedings of the X Jornadas en Tecnología del Habla and V Iberian SLTech Workshop (IberSPEECH 2018), 2018, pp. 15-19.

[Bibtex]@inproceedings{Rituerto-Gonzalez2018, author={Rituerto-Gonz{\'a}lez, Esther and Gallardo-Antol{\'i}n, Ascensi{\'o}n and Pel{\'a}ez-Moreno, C.}, booktitle={Proceedings of the X Jornadas en Tecnología del Habla and V Iberian SLTech Workshop (IberSPEECH 2018)}, title={Speaker Recognition under Stress Conditions}, year={2018}, volume={}, number={}, pages={15-19}, doi={}, ISSN={}, month={Nov},}

- C. Jiménez-Recio, A. Zlotnik, A. Gallardo-Antolín, J. M. Montero, and J. C. Martínez-Castrillo, “Prediction of the Degree of Parkinson’s Condition Using Recordings of Patients’ Voices,” in Proceedings of the Ninth International Conference on Soft Computing and Pattern Recognition (SoCPaR 2017), Cham, 2018, pp. 120-129.

[Bibtex]@inproceedings{Jimenez-Recio2018, author="Jim{\'e}nez-Recio, Clara and Zlotnik, Alexander and Gallardo-Antol{\'i}n, Ascensi{\'o}n and Montero, Juan M. and Mart{\'i}nez-Castrillo, Juan Carlos", editor="Abraham, Ajith and Haqiq, Abdelkrim and Muda, Azah Kamilah and Gandhi, Niketa", title="Prediction of the Degree of Parkinson's Condition Using Recordings of Patients' Voices", booktitle="Proceedings of the Ninth International Conference on Soft Computing and Pattern Recognition (SoCPaR 2017)", year="2018", publisher="Springer International Publishing", address="Cham", pages="120--129", isbn="978-3-319-76357-6" }

- A. Rodríguez-Hidalgo, A. Gallardo-Antolín, and C. Peláez-Moreno, “Towards aural saliency detection with logarithmic Bayesian Surprise under different spectro-temporal representations,” in Proceedings of the IX Jornadas en Tecnología del Habla and V Iberian SLTech Workshop (IberSPEECH 2016), 2016, pp. 99-108.

[Bibtex]@inproceedings{Rodriguez-Hidalgo2016, author={Rodr{\'i}guez-Hidalgo, Antonio and Gallardo-Antol{\'i}n, Ascensi{\'o}n and Pel{\'a}ez-Moreno, C.}, booktitle={Proceedings of the IX Jornadas en Tecnología del Habla and V Iberian SLTech Workshop (IberSPEECH 2016)}, title={Towards aural saliency detection with logarithmic Bayesian Surprise under different spectro-temporal representations}, year={2016}, volume={}, number={}, pages={99-108}, doi={}, ISSN={}, month={Nov},}

- J. Ludeña-Choez and A. Gallardo-Antolín, “Non-negative Matrix Factorization Applications to Speech Technologies,” in Proceedings of the IX Jornadas en Tecnología del Habla and V Iberian SLTech Workshop (IberSPEECH 2016), 2016, pp. 339-348.

[Bibtex]@inproceedings{Ludena-Choez2016, author={Lude{\~n}a-Choez, Jimmy and Gallardo-Antol{\'i}n, Ascensi{\'o}n}, booktitle={Proceedings of the IX Jornadas en Tecnología del Habla and V Iberian SLTech Workshop (IberSPEECH 2016)}, title={Non-negative Matrix Factorization Applications to Speech Technologies}, year={2016}, volume={}, number={}, pages={339-348}, doi={}, ISSN={}, month={Nov},}

- T. Martínez-Cortés, I. González-Díaz, and F. Díaz-de-María, “Automatic Learning of Image Representations Combining Content and Metadata,” in 2018 25th IEEE International Conference on Image Processing (ICIP), 2018, pp. 1972-1976.

[Bibtex]@INPROCEEDINGS{Martinez-Cortes2018, author={T. Martínez-Cortés and I. González-Díaz and F. Díaz-de-María}, booktitle={2018 25th IEEE International Conference on Image Processing (ICIP)}, title={Automatic Learning of Image Representations Combining Content and Metadata}, year={2018}, volume={}, number={}, pages={1972-1976}, keywords={feedforward neural nets;image representation;learning (artificial intelligence);meta data;automatic training framework;image visual contents;image descriptors;location-related information;automatic learning;image representation;deep convolutional neural networks;image visual content;metadata;landmark discovery task;content-based image representation;visual-related information;loss-function;Visualization;Metadata;Task analysis;Training;Urban areas;Global Positioning System;Computational modeling;CNN;metadata;loss function;weak labels}, doi={10.1109/ICIP.2018.8451566}, ISSN={2381-8549}, month={Oct},}

- M. Fernández-Torres, I. González-Díaz, and F. Díaz-de-María, “A probabilistic topic approach for context-aware visual attention modeling,” in 2016 14th International Workshop on Content-Based Multimedia Indexing (CBMI), 2016, pp. 1-6.

[Bibtex]@INPROCEEDINGS{Fernandez-Torres2016, author={M. Fernández-Torres and I. González-Díaz and F. Díaz-de-María}, booktitle={2016 14th International Workshop on Content-Based Multimedia Indexing (CBMI)}, title={A probabilistic topic approach for context-aware visual attention modeling}, year={2016}, volume={}, number={}, pages={1-6}, keywords={image fusion;probability;ubiquitous computing;video signal processing;probabilistic topic approach;context-aware visual attention modeling;complex visual processes;video frames;image frames;generic fusion schemes;video category;Visualization;Feature extraction;Probabilistic logic;Context modeling;Image color analysis;Computational modeling;Adaptation models}, doi={10.1109/CBMI.2016.7500272}, ISSN={1949-3991}, month={June},}

- F. J. Valverde-Albacete, C. Peláez-Moreno, I. P. Cabrera, P. Cordero, and M. Ojeda-Aciego, “A Data Analysis Application of Formal Independence Analysis,” in Concept Lattices and their Applications (CLA 2018), 2018, pp. 1-12.

[Bibtex]@incollection{val:pel:cab:cor:oje:18b, Author = {Valverde-Albacete, Francisco J and Pel{\'a}ez-Moreno, Carmen and Cabrera, Inma P and Cordero, P and Ojeda-Aciego, Manuel}, Booktitle = {Concept Lattices and their Applications (CLA 2018)}, Date-Added = {2018-05-08 07:39:20 +0000}, Date-Modified = {2018-05-08 07:39:20 +0000}, Pages = {1--12}, Title = {{A Data Analysis Application of Formal Independence Analysis}}, Year = {2018}}

- A. Rodríguez-Hidalgo, C. Peláez-Moreno, and A. Gallardo-Antolín, “Towards multimodal saliency detection: An enhancement of audio-visual correlation estimation,” in 2017 IEEE 16th International Conference on Cognitive Informatics Cognitive Computing (ICCI*CC), 2017, pp. 438-443.

[Bibtex]@INPROCEEDINGS{rod:pel:gal:17, author={A. Rodríguez-Hidalgo and C. Peláez-Moreno and A. Gallardo-Antolín}, booktitle={2017 IEEE 16th International Conference on Cognitive Informatics Cognitive Computing (ICCI*CC)}, title={Towards multimodal saliency detection: An enhancement of audio-visual correlation estimation}, year={2017}, volume={}, number={}, pages={438-443}, keywords={acoustic signal processing;audio signal processing;audio-visual systems;biomedical optical imaging;feature extraction;image sequences;motion estimation;object detection;video signal processing;multimodal saliency detection;audio-visual correlation estimation;visual saliency detection;acoustic inconsistency;visual motion estimation;auditory saliency algorithms;motion estimation procedure;acoustic saliency;eye-tracker fixations;auditory cue;optical flow;Visualization;Acoustics;Videos;Correlation;Proposals;Motion estimation;Estimation;Index Terms;saliency;acoustic saliency;multimodal saliency;audiovisual correlation;cognitive process model}, doi={10.1109/ICCI-CC.2017.8109785}, ISSN={}, month={July},}

- F. J. Valverde-Albacete and C. Peláez-Moreno, “On the Relation between Semifield-Valued FCA and the Idempotent Singular Value Decomposition,” in 2018 IEEE International Conference on Fuzzy Systems (FUZZ-IEEE), 2018, pp. 1-8.

[Bibtex]@inproceedings{val:pel:18e, title={On the Relation between Semifield-Valued FCA and the Idempotent Singular Value Decomposition}, author={Francisco J. Valverde-Albacete and Carmen Pel{\'a}ez-Moreno}, booktitle={2018 IEEE International Conference on Fuzzy Systems (FUZZ-IEEE)}, year={2018}, pages={1-8} }

- F. J. Valverde-Albacete and C. Peláez-Moreno, “Entropic Evaluation of Classification. A hands-on, get-dirty introduction,” in International Joint Conference on Neural Networks (IJCNN’18 at WCCI 2018), 2018.

[Bibtex]@inproceedings{val:pel:18d, Author = {Valverde-Albacete, Francisco J. and Carmen Pel\'aez-Moreno}, Booktitle = {International Joint Conference on Neural Networks (IJCNN'18 at WCCI 2018)}, Date-Added = {2018-05-08 09:28:45 +0000}, Date-Modified = {2018-05-08 09:39:26 +0000}, Title = {Entropic Evaluation of Classification. A hands-on, get-dirty introduction}, Year = {2018}, Bdsk-Url-1 = {http://www.ecomp.poli.br/~wcci2018/ijcnn-tutorials/#IJCNN1_04}}

- A. Zlotnik, J. M. M. Martínez, R. S. S. Hernández, and A. Gallardo-Antolín, “Random forest-based prediction of Parkinson’s disease progression using acoustic, ASR and intelligibility features,” in 16th Annual Conference of the International Speech Communication Association (INTERSPEECH 2015), 2015, pp. 503-507.

[Bibtex]@inproceedings{zlo15, booktitle = {16th Annual Conference of the International Speech Communication Association (INTERSPEECH 2015)}, title = {Random forest-based prediction of Parkinson's disease progression using acoustic, ASR and intelligibility features}, author = {Alexander Zlotnik and Juan Manuel Montero Mart{\'i}nez and Rub{\'e}n San Segundo Hern{\'a}ndez and Ascensi{\'o}n Gallardo-Antol{\'i}n}, year = {2015}, pages = {503--507}, keywords = {Random forest, regression, Parkinson's disease, ASR features, intelligibility}, url = {http://oa.upm.es/42002/}, abstract = {The Interspeech ComParE 2015 PC Sub-Challenge consists of automatically determining the degree of Parkinson's condition using exclusively the patient's voice. In this paper, we face this problem as a regression task and in order to succeed, we propose the use of an ensemble learning method, Random Forest (RF), in combination with features of different nature: acoustic characteristics, features derived from the output of an Automatic Speech Recognition system (ASR) and non-intrusive intelligibility measures. The system outperforms the baseline results achieving a relative improvement higher than 19\% in the development set.}, key ={samurai} }

- F. de-la-Calle-Silos, F. J. Valverde-Albacete, A. Gallardo-Antolín, and C. Peláez-Moreno, “Preliminary experiments on the robustness of biologically motivated features for DNN-based ASR,” in 2015 4th International Work Conference on Bioinspired Intelligence, 2015, pp. 169-175.

[Bibtex]@book{RN415, author = {de-la-Calle-Silos, F. and Valverde-Albacete, Francisco J. and Gallardo-Antolín, A. and Peláez-Moreno, C.}, title = {Preliminary experiments on the robustness of biologically motivated features for {DNN-based ASR}}, series = {2015 4th International Work Conference on Bioinspired Intelligence}, pages = {169-175}, ISBN = {978-1-4673-7846-8}, url = {://WOS:000380501500026}, year = {2015}, type = {Book}, key = {samurai} }

- V. Buso, I. González-Díaz, and J. Benois-Pineau, “Object Recognition with Top-Down Visual Attention Modeling for Behavioral Studies,” in 2015 IEEE International Conference on Image Processing, 2015, pp. 4431-4435.

[Bibtex]@inbook{RN416, author = {Buso, Vincent and González-Díaz, Ivan and Benois-Pineau, Jenny }, title = {OBJECT RECOGNITION WITH TOP-DOWN VISUAL ATTENTION MODELING FOR BEHAVIORAL STUDIES}, booktitle = {2015 {IEEE} International Conference on Image Processing}, series = {IEEE International Conference on Image Processing ICIP}, pages = {4431-4435}, ISBN = {978-1-4799-8339-1}, url = {://WOS:000371977804114}, year = {2015}, type = {Book Section}, key = {samurai} }

- F. de-la-Calle-Silos, A. Gallardo-Antolin, and C. Peláez-Moreno, “An Analysis of Deep Neural Networks in Broad Phonetic Classes for Noisy Speech Recognition,” in Advances in Speech and Language Technologies for Iberian Languages, Iberspeech 2016, A. Abad, A. Ortega, A. Teixeira, C. G. Mateo, C. D. M. Hinarejos, F. Perdigao, F. Batista, and N. Mamede, Eds., 2016, vol. 10077, pp. 87-96.

[Bibtex]@inbook{RN407, author = {de-la-Calle-Silos, F. and Gallardo-Antolin, A. and Peláez-Moreno, C.}, title = {An Analysis of Deep Neural Networks in Broad Phonetic Classes for Noisy Speech Recognition}, booktitle = {Advances in Speech and Language Technologies for Iberian Languages, Iberspeech 2016}, editor = {Abad, A. and Ortega, A. and Teixeira, A. and Mateo, C. G. and Hinarejos, C. D. M. and Perdigao, F. and Batista, F. and Mamede, N.}, series = {Lecture Notes in Computer Science}, volume = {10077}, pages = {87-96}, ISBN = {978-3-319-49169-1; 978-3-319-49168-4}, DOI = {10.1007/978-3-319-49169-1_9}, url = {://WOS:000389797600009}, year = {2016}, type = {Book Section} }

- F. J. Valverde-Albacete and C. Peláez-Moreno, “The Linear Algebra in Formal Concept Analysis over Idempotent Semifields,” in Formal Concept Analysis, J. Baixeries, C. Sacarea, and M. Ojeda-Aciego, Eds., 2015, vol. 9113, pp. 97-113.

[Bibtex]@inbook{RN417, author = {Valverde-Albacete, Francisco J. and Peláez-Moreno, Carmen}, title = {The Linear Algebra in Formal Concept Analysis over Idempotent Semifields}, booktitle = {Formal Concept Analysis}, editor = {Baixeries, J. and Sacarea, C. and Ojeda-Aciego, M.}, series = {Lecture Notes in Artificial Intelligence}, volume = {9113}, pages = {97-113}, ISBN = {978-3-319-19545-2; 978-3-319-19544-5}, DOI = {10.1007/978-3-319-19545-2_6}, url = {://WOS:000364534600006}, year = {2015}, type = {Book Section}, key = {samurai} }

PhD Theses

- J. D. Ludeña-Choez, “Contribuciones a la Aplicación de la Factorización de Matrices No Negativas a las Tecnologías del Habla,” PhD Thesis, 2015.

[Bibtex]@phdthesis{tesis1, author = {Jimmy D. Ludeña-Choez}, title = {Contribuciones a la Aplicación de la Factorización de Matrices No Negativas a las Tecnologías del Habla}, school = {Escuela Politécnica Superior, Universidad Carlos III de Madrid.}, year = 2015, month = 4 }

- J. M. González-Calabozo, “Análisis exploratorio de datos de expresión genómica mediante el análisis en conceptos formales,” PhD Thesis, 2016.

[Bibtex]@phdthesis{tesis2, author = {José María González-Calabozo}, title = {Análisis exploratorio de datos de expresión genómica mediante el análisis en conceptos formales.}, school = {Escuela Politécnica Superior, Universidad Carlos III de Madrid.}, year = 2016, month = 2 }

- J. L. González-de-Suso-Molinero, “Contributions to the Solution of the Rate-Distortion Optimization Problem in Video Coding,” PhD Thesis, 2016.

[Bibtex]@phdthesis{tesis3, author = {José Luis González-de-Suso-Molinero}, title = {Contributions to the Solution of the Rate-Distortion Optimization Problem in Video Coding}, school = {Escuela Politécnica Superior, Universidad Carlos III de Madrid.}, year = 2016, month = 7 }

- A. Jiménez-Moreno, “Algorithms for complexity management in video coding,” PhD Thesis, 2016.

[Bibtex]@phdthesis{tesis4, author = {Amaya Jiménez-Moreno}, title = {Algorithms for complexity management in video coding}, school = {Escuela Politécnica Superior, Universidad Carlos III de Madrid.}, year = 2016, month = 9 }

- F. de la Calle-Silos, “Bio-Motivated Features and Deep Learning for Robust Speech Recognition,” PhD Thesis, 2017.

[Bibtex]@phdthesis{tesis5, author = {Fernando de la Calle-Silos}, title = {Bio-Motivated Features and Deep Learning for Robust Speech Recognition}, school = {Escuela Politécnica Superior, Universidad Carlos III de Madrid.}, year = 2017, month = 9 }

- M. Á. Fernández-Torres, “Hierarchical representations for spatio-temporal visual attention modeling and understanding,” PhD Thesis, 2019.

[Bibtex]@phdthesis{tesis6, author = {Miguel Ángel Fernández-Torres}, title = {Hierarchical representations for spatio-temporal visual attention modeling and understanding}, school = {Escuela Politécnica Superior, Universidad Carlos III de Madrid.}, year = 2019, month = 2 }

Funded by:

MINECO (Ministry of Economy and Competitiveness)

TEC2014-53390-P (Convocatoria 2014 de Proyectos de I+D del Programa Estatal de Fomento de la Investigación Científica y Técnica de Excelencia)

Jan. 2015 – Dec. 2018

Contact Persons

Ascensión Gallardo-Antolín (gallardo at tsc.uc3m.es)

Carmen Peláez-Moreno (carmen at tsc.uc3m.es)